Electrical equipment rarely operates in ideal conditions. In factories and other workplaces, the air carries dust, moisture, oil, and sometimes corrosive fumes, and temperature swings add further stress.

If enclosures do not provide enough protection, failures show up quickly, bringing safety hazards and expensive downtime. To reduce these risks, engineers rely on enclosure standards.

These standards define levels of protection against hazards such as dust and moisture.

In North America, the most common system is the NEMA rating. The NEMA type often determines which enclosure is right for a piece of electrical equipment.

Choosing well improves safety and long-term durability. Understanding why NEMA ratings exist makes them easier to apply.

This article reviews the purpose of NEMA ratings, common enclosure types, testing methods, and typical applications.

What is a NEMA rating?

NEMA stands for the National Electrical Manufacturers Association, a U.S.-based association that develops standards for electrical equipment. Its enclosure ratings are widely used across North America.

For electrical enclosures, the standard defines what each type must withstand. The ratings describe protection against the environment. They are based on performance, not on a particular construction method or material.

To earn a rating, an enclosure must pass specified environmental tests. Depending on the type, these cover dust ingress, water ingress, and corrosion resistance. An enclosure that passes is assigned the corresponding NEMA type.

Purpose of NEMA Ratings

Rain and humidity can quickly corrode metal parts inside equipment. Dust builds up slowly inside machines and blocks cooling paths.

Water can reach circuits where it should never be. Enclosures protect wiring and components from these surroundings.

The NEMA type on a label tells you what kind of environment the enclosure suits. Choosing the right one means fewer surprises during installation.

A correct choice keeps people safe and equipment running reliably. A wrong one leads to failures later.

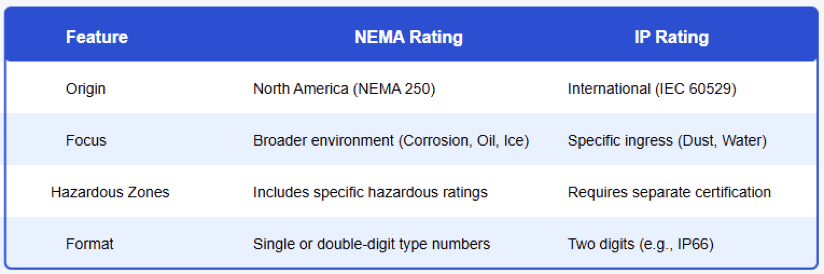

NEMA Ratings Compared to IP Ratings

IP (Ingress Protection) codes also rate resistance to dust and liquids. They are defined by the international standard IEC 60529.

NEMA covers more than particles and moisture. Both systems address the ingress of solid objects and water, but NEMA also considers factors such as corrosion, oil exposure, and ice formation.

The two systems are therefore not equivalent.

A NEMA type cannot be converted directly into an IP code, or the reverse. Any mapping between them is approximate and should be treated with caution.
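
To make the difference in scope concrete, here is a minimal Python sketch that splits a basic IP code into its two digits. The function and class names are hypothetical, and the sketch ignores the optional trailing letters that IEC 60529 allows. The point is that an IP code carries only two fields, one for solids and one for liquids, so it has no place to record corrosion, oil, or ice resistance.

```python
import re
from typing import NamedTuple, Optional


class IPCode(NamedTuple):
    solids: Optional[int]   # first digit: solid objects and dust (0-6)
    liquids: Optional[int]  # second digit: water (0-9)


def parse_ip_code(code: str) -> IPCode:
    """Split a basic IP code such as 'IP66' or 'IPX4' into its two digits.

    An 'X' means the digit is not specified and is returned as None.
    """
    match = re.fullmatch(r"IP([0-6X])([0-9X])", code.strip().upper())
    if match is None:
        raise ValueError(f"not a two-digit IP code: {code!r}")
    solids, liquids = (None if ch == "X" else int(ch) for ch in match.groups())
    return IPCode(solids, liquids)
```

For example, `parse_ip_code("IP66")` yields a solids level of 6 and a liquids level of 6, and nothing else. Any NEMA-to-IP comparison can therefore only speak to those two aspects.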

Comparison table of common NEMA ratings and approximate IP equivalents

Common NEMA Enclosure Ratings

NEMA 1

NEMA 1 enclosures are for indoor use. They protect people from accidental contact with live parts and keep out large objects. Protection stops there: dust can still get in.

So can water, which is why they are intended only for indoor use. They are typically installed in clean, dry locations such as offices.

NEMA 3 and 3R

NEMA 3 enclosures are built for outdoor use and protect equipment from the weather. They keep out rain, sleet, and snow.

They also keep out windblown dust. NEMA 3R enclosures protect against precipitation but are not rated against windblown dust.

NEMA 3R designs may include ventilation openings, which is why they do not block dust completely. Both types are common outdoors, for example on junction boxes and utility service equipment.

NEMA 4 and 4X

NEMA 4 enclosures keep out dust and splashing water. They also withstand hose-directed water.

NEMA 4X adds corrosion resistance, which matters wherever moisture or chemicals attack metal.

It includes every NEMA 4 protection against dust and water. Harsh environments call for this kind of enclosure.

Food processing plants use these enclosures, as do coastal sites exposed to salt air. Chemical plants and wastewater facilities rely on them too.

NEMA 6 and 6P

NEMA 6 enclosures can withstand temporary submersion, within limits. The standard defines the depth and duration. They also keep out dust.

For prolonged submersion, NEMA 6P is the appropriate type. It also adds corrosion protection. These enclosures are used in demanding locations such as damp underground vaults and marine installations.

NEMA 12 and 13

In indoor industrial spaces, NEMA 12 enclosures keep out dust, dirt, and stray fibers. They also resist dripping and light splashing of non-corrosive liquids.

Factories rely heavily on these enclosures, and machine control panels frequently use them.

Although built for indoor use, they hold up well in dirty surroundings. NEMA 13 enclosures add stronger protection against oil.

They resist spraying and splashing of coolants and lubricants. Machine shops with heavy tooling often choose them.
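
The enclosure types above can be read as sets of protections, which makes the selection logic easy to sketch in Python. This is a simplified encoding of the descriptions in this section, not the full table from the NEMA standard. The hazard names and the `suitable_types` function are assumptions made for illustration.

```python
# Simplified encoding of the protections described in this section.
# Hazard names are illustrative labels, not terms taken from the standard.
WEATHER = frozenset({"rain", "sleet", "snow"})

PROTECTIONS = {
    "1": frozenset(),  # indoor only; no dust or water protection
    "3R": WEATHER,     # precipitation, but not windblown dust
    "3": WEATHER | {"dust"},
    "4": WEATHER | {"dust", "splashing_water", "hose_water"},
    "12": frozenset({"dust", "fibers", "dripping_liquid"}),
}
PROTECTIONS["4X"] = PROTECTIONS["4"] | {"corrosion"}
PROTECTIONS["6"] = PROTECTIONS["4"] | {"temporary_submersion"}
PROTECTIONS["6P"] = PROTECTIONS["6"] | {"prolonged_submersion", "corrosion"}
PROTECTIONS["13"] = PROTECTIONS["12"] | {"oil_coolant_spray"}


def suitable_types(required):
    """Return the types whose listed protections cover every required hazard.

    Types with the fewest listed protections come first, so the first entry
    is the least over-specified match in this simplified table.
    """
    required = frozenset(required)
    matches = [t for t, covered in PROTECTIONS.items() if required <= covered]
    return sorted(matches, key=lambda t: (len(PROTECTIONS[t]), t))
```

With this table, `suitable_types({"hose_water", "corrosion"})` returns `["4X", "6P"]`. Ordering by the number of listed protections is only a rough stand-in for cost. A real selection should start from the standard and the manufacturer's data.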

Testing Methods for NEMA Ratings

NEMA ratings are established by testing. Enclosures are tested under conditions that simulate real use, and each test follows a defined procedure.

Water tests use sprays or hose streams. Dust tests expose the enclosure to airborne particles.

Corrosion tests use salt spray. Some ratings also require an external icing test.

The enclosure's structural integrity is checked as well. Every requirement for the type must be met.
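
The all-or-nothing rule can be sketched in a few lines of Python. The test names used here are hypothetical labels, not the official test list for any NEMA type. The sketch only shows that one failed or missing test is enough to withhold the rating.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    name: str     # hypothetical test label, e.g. "hose_down"
    passed: bool  # outcome of that test


def unmet_requirements(required, results):
    """List the required tests that were not run or did not pass."""
    passed = {r.name for r in results if r.passed}
    return [name for name in required if name not in passed]


def earns_rating(required, results):
    """An enclosure qualifies only when no required test is unmet."""
    return not unmet_requirements(required, results)
```

For instance, if the required tests are `["dust", "hose_down", "corrosion"]` and only the corrosion test fails, `unmet_requirements` returns `["corrosion"]` and `earns_rating` returns `False`.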

Illustration showing water, dust, and corrosion testing methods

Why NEMA Ratings Matter in System Design

A wrong enclosure choice causes trouble quickly. Reliability suffers first. Weak protection compromises safety, and equipment fails early. Moisture degrades insulation and causes electronics to fail.

Dust buildup traps heat. Failures drive up repair costs. Over-specifying protection brings its own problems: cost rises without a matching gain in safety, and installation becomes more complicated than necessary.

NEMA ratings support better decisions by making the required level of protection clear.

Uses for NEMA Rated Enclosures

NEMA-rated enclosures appear in nearly every industry. Factories rely heavily on NEMA 12.

Some step up to NEMA 13 where oil and coolant are present. Outdoor installations commonly use NEMA 3R.

Wash-down areas often need NEMA 4 enclosures. Food plants usually require NEMA 4X.

Different environments bring different demands, and NEMA types make it easier to choose the right enclosure.
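
As a quick reference, the pairings above can be captured in a small Python lookup. The setting names and the `typical_types` function are illustrative choices for this sketch. The returned types are starting points drawn from this section, not a substitute for assessing the actual site.

```python
# Typical pairings as described in this section. The setting names are
# illustrative keys chosen for this sketch.
TYPICAL_TYPES = {
    "factory": ("12", "13"),
    "outdoor": ("3R",),
    "wash_down": ("4",),
    "food_plant": ("4X",),
}


def typical_types(setting):
    """Return the NEMA types this section associates with a setting."""
    key = setting.strip().lower().replace("-", "_").replace(" ", "_")
    try:
        return TYPICAL_TYPES[key]
    except KeyError:
        known = ", ".join(sorted(TYPICAL_TYPES))
        raise ValueError(
            f"unknown setting {setting!r}; known settings: {known}"
        ) from None
```

Calling `typical_types("Wash-down")` returns `("4",)`, while an unlisted setting raises a `ValueError` that names the known settings.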

Diagram showing industrial environments with typical NEMA enclosure ratings

Common Misconceptions About NEMA Ratings

A higher NEMA number is not automatically the better choice. More protection usually means more cost and often more weight, and not every application needs it. The enclosure material is not part of the rating.

A plastic enclosure can carry the same rating as a steel one. What matters is performance, not construction. NEMA and IP ratings are also often confused. Neither replaces the other, and knowing the difference helps avoid wrong choices.

NEMA Ratings in IIoT and Industry 4.0

On modern factory floors, machines communicate through sensors and connected devices. This is part of the Industrial Internet of Things (IIoT) and Industry 4.0. The enclosures that house these electronics need to be robust.

Well-chosen enclosures keep dust and moisture away from them. An enclosure rated for its actual environment lowers the risk of sensors and network devices dropping offline.

The equipment keeps running and reporting through wash-downs, dust, and other harsh plant conditions.

Conclusion

This article has explained what NEMA ratings mean and their role in enclosure selection.

The ratings describe how well an enclosure stands up to its surroundings. Dust protection is one criterion.

Water exposure is another, and oil contact is covered as well.

Corrosion resistance matters in harsh environments, and some types can withstand submersion.

Engineers across North America rely on these ratings every day because they help build electrical systems that run without unexpected failures. When the rating is chosen correctly, hazards decrease and equipment stays online longer.

For anyone who designs, installs, or maintains equipment, understanding NEMA ratings is essential.

Applied correctly, they keep systems running reliably over time.

FAQ: What is a NEMA rating?

What is a NEMA rating?

NEMA is the National Electrical Manufacturers Association, an organization that sets performance criteria for electrical enclosures used in industrial and commercial settings. A NEMA rating identifies which of those criteria an enclosure meets.

Do NEMA ratings include protection against corrosion?

Yes. Some ratings, like NEMA 4X, call for corrosion resistance testing in addition to water and dust protection.

Does the material of the enclosure affect the NEMA rating?

The rating is based on performance, not on material alone. Materials like stainless steel or fiberglass are often used to meet more demanding NEMA ratings.

Do NEMA ratings correspond to IP ratings?

No. NEMA and IP ratings are defined by different criteria. NEMA ratings take additional factors into account, including oil exposure and corrosion resistance.